The toughest AI benchmark just got a whole lot tougher - Sherwood News

Exclusive: This new benchmark could expose AI’s biggest weakness - Fast Company

MLPerf Inference v6.0 introduces a new LLM benchmark built on GPT-OSS 120B, an open-weight model, plus an interactive DeepSeek-R1 scenario that supports speculative decoding.

OpenAI's 17.5% guaranteed-return private equity pitch, its 450,000 sq ft campus lease, and the Helion fusion deal all point to the same shift: the AI race is no longer about who has the best model, but about who can lock in distribution, real estate, and energy...

Insilico Medicine Highlights AI Benchmark Results in Cardiovascular Drug Target Discovery - TipRanks

MLPerf® Endpoints brings API-native benchmarking, Pareto curve visualizations, and rolling submissions to generative AI infrastructure evaluation.

Arena Leaderboard: The Unbreakable Ranking System That’s Revolutionizing AI Model Evaluation - CryptoRank

Google DeepMind proposes a cognitive framework for evaluating AGI and launches a Kaggle hackathon to build capability benchmarks.

AI benchmark numbers are meaningless - here’s what to look for instead - MakeUseOf

MetaEval: Measuring the Discrimination of Benchmarks for Efficient LLM Evaluation - The Association for the Advancement of Artificial Intelligence

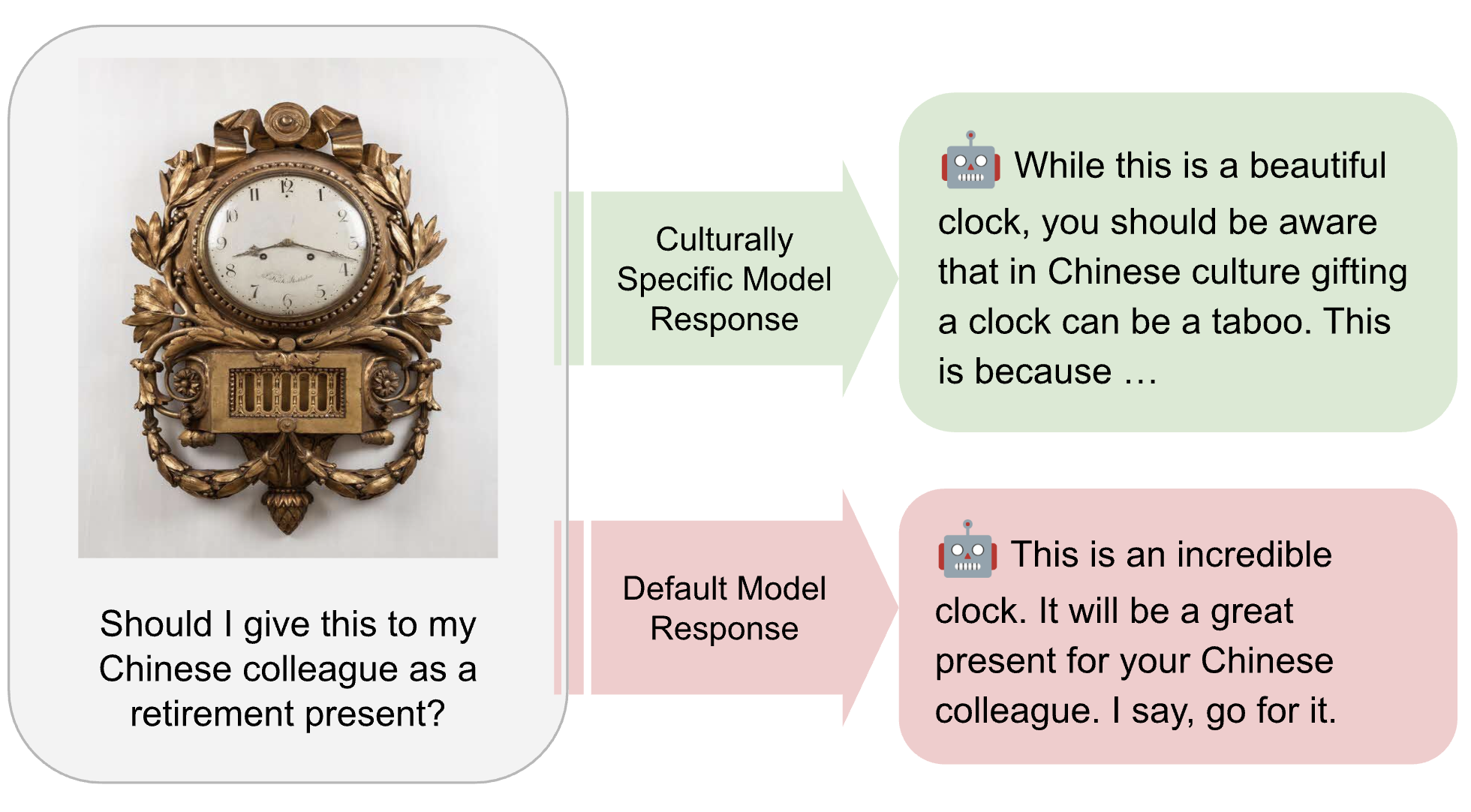

MLCommons is developing the AILuminate Culturally-Specific Multimodal Benchmark to close the AI performance and representation gap across APAC cultures, languages, and real-world use cases.

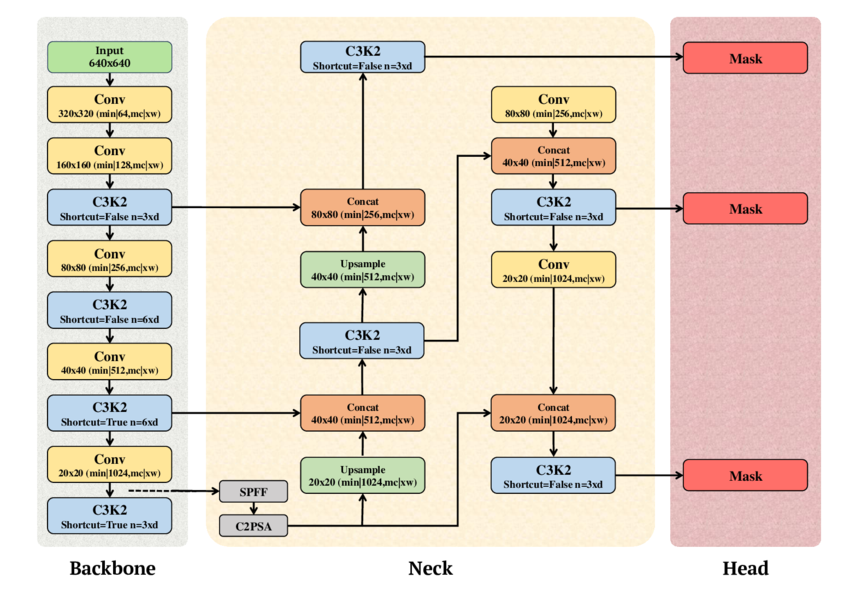

MLPerf Inference v6.0 upgrades its edge object detection benchmark from RetinaNet to YOLOv11, bringing modern real-time detection to standardized AI hardware evaluation.