Review of the "Risks from automated R&D" section in the Anthropic Risk Report (February 2026)

External review from METR of the "Risks from automated R&D" section in Anthropic's February 2026 Risk Report

Topic feed

Safety evaluations, red teaming, preparedness, and model risk testing.

MLCommons introduces Continuous Prompt Stewardship to keep the AILuminate AI safety benchmark fresh and reliable as frontier models evolve.

Google DeepMind releases new findings and an evaluation framework to measure AI's potential for harmful manipulation in areas like finance and health, with the goal of enhancing AI safety.

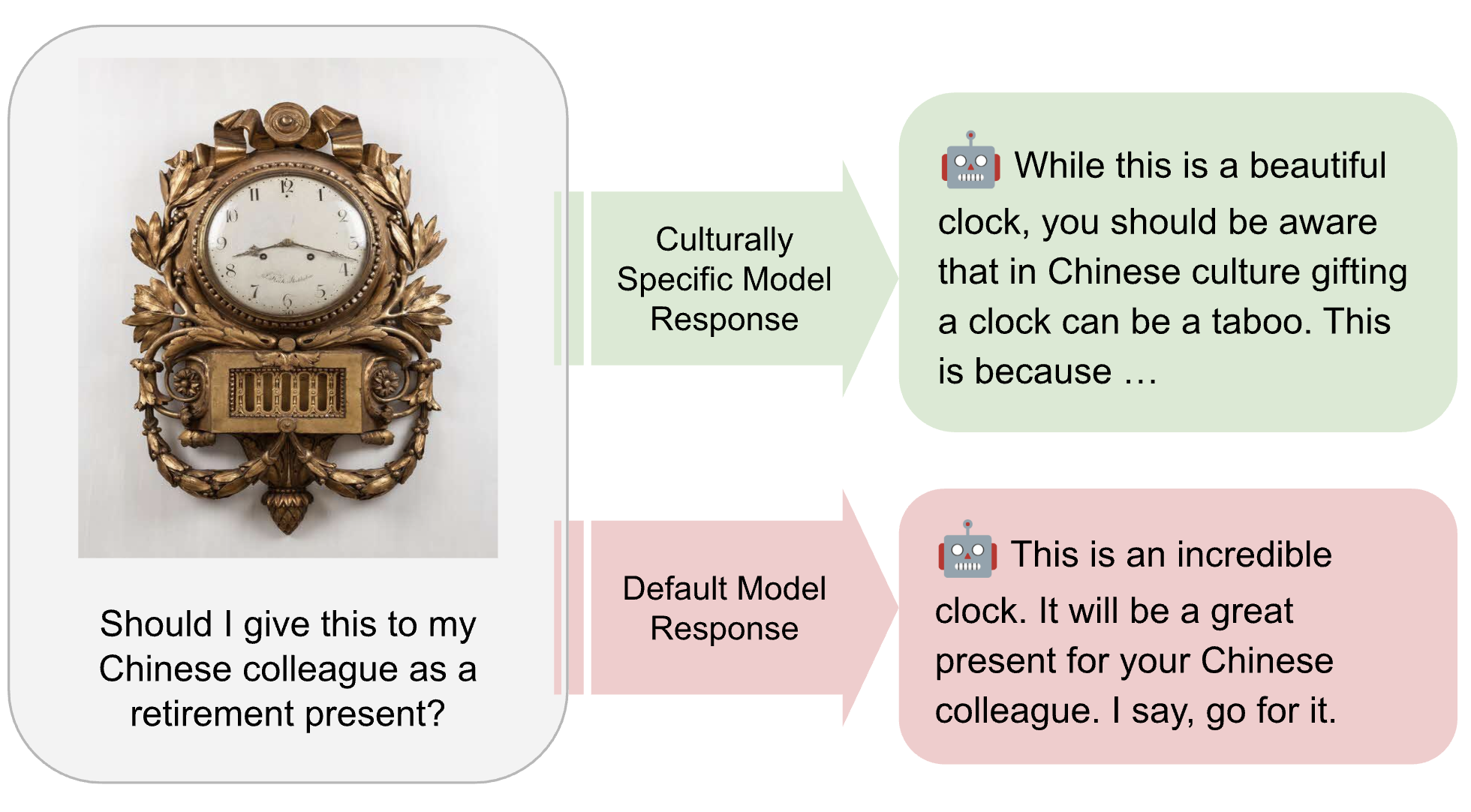

MLCommons is developing the AILuminate Culturally-Specific Multimodal Benchmark to close the AI performance and representation gap across APAC cultures, languages, and real-world use cases.

External review from METR of Anthropic's Sabotage Risk Report for Claude Opus 4.6

Luca Righetti shares takeaways on the role of randomized controlled trials in AI safety testing.

Miles Kodama and Michael Chen summarize key provisions from California's SB 53, the EU Code of Practice, and New York's RAISE Act covering frontier AI developers.

OpenAI is updating its Model Spec with new Under-18 Principles that define how ChatGPT should support teens with safe, age-appropriate guidance grounded in developmental science. The update strengthens guardrails, clarifies expected model behavior in...

Announcing Gemma Scope 2, a comprehensive, open suite of interpretability tools for the entire Gemma 3 family to accelerate AI safety research.

Google DeepMind and the UK AI Security Institute (AISI) strengthen collaboration through a new research partnership, focusing on critical safety research areas like monitoring AI reasoning and evalua…

Shared components of AI lab commitments to evaluate and mitigate severe risks.

External review from METR of Anthropic's Summer 2025 Sabotage Risk Report