Gemma Scope 2: Helping the AI Safety Community Deepen Understanding of Complex Language Model Behavior

Announcing Gemma Scope 2, a comprehensive, open suite of interpretability tools for the entire Gemma 3 family to accelerate AI safety research.

Google DeepMind and the UK AI Security Institute (AISI) strengthen collaboration through a new research partnership, focusing on critical safety research areas like monitoring AI reasoning and evalua…

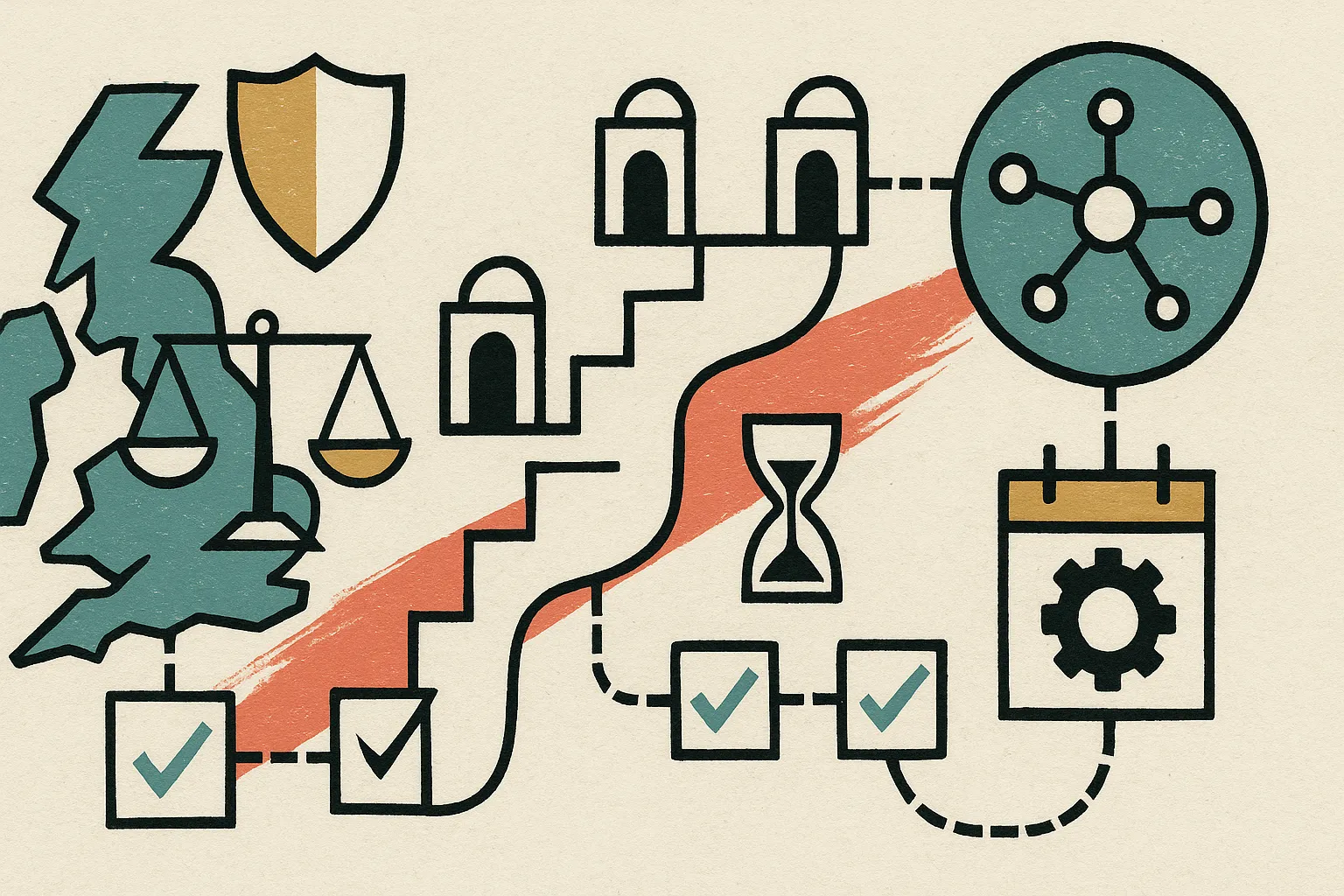

Shared components of AI lab commitments to evaluate and mitigate severe risks.

A practical model for growing AI agent autonomy - levels, controls, and KPIs - grounded in risk frameworks (NIST AI RMF, ISO/IEC 42001) and aligned with EU AI Act oversight.

External review from METR of Anthropic's Summer 2025 Sabotage Risk Report

Details on external recommendations from METR for gpt-oss Preparedness experiments and follow-up from OpenAI.

ChatGPT agent System Card: OpenAI’s agentic model unites research, browser automation, and code tools with safeguards under the Preparedness Framework.

Current views on information relevant for visibility into frontier AI risk.

Sharing our updated framework for measuring and protecting against severe harm from frontier AI capabilities.

We’re exploring the frontiers of AGI, prioritizing technical safety, proactive risk assessment, and collaboration with the AI community.

This report outlines the safety work carried out prior to releasing deep research, including external red teaming, frontier risk evaluations according to our Preparedness Framework, and an overview of the mitigations we built in to address key risk areas.

What the EU AI Act requires in 2025–2027, how it lines up with the UK ICO's AI & Data Protection Risk Toolkit, and the exact outputs your team should ship.