Standardizing Generative AI Service Evaluation: An API-Centric Benchmarking Approach - MLCommons

MLPerf® Endpoints brings API-native benchmarking, Pareto curve visualizations, and rolling submissions to generative AI infrastructure evaluation.

Topic feed

AI benchmarks, leaderboards, and comparative model testing.

Arena Leaderboard: The Unbreakable Ranking System That’s Revolutionizing AI Model Evaluation CryptoRank

Google DeepMind proposes a cognitive framework to evaluate AGI and launches a Kaggle hackathon to build capability benchmarks

AI benchmark numbers are meaningless — here’s what to look for instead MakeUseOf

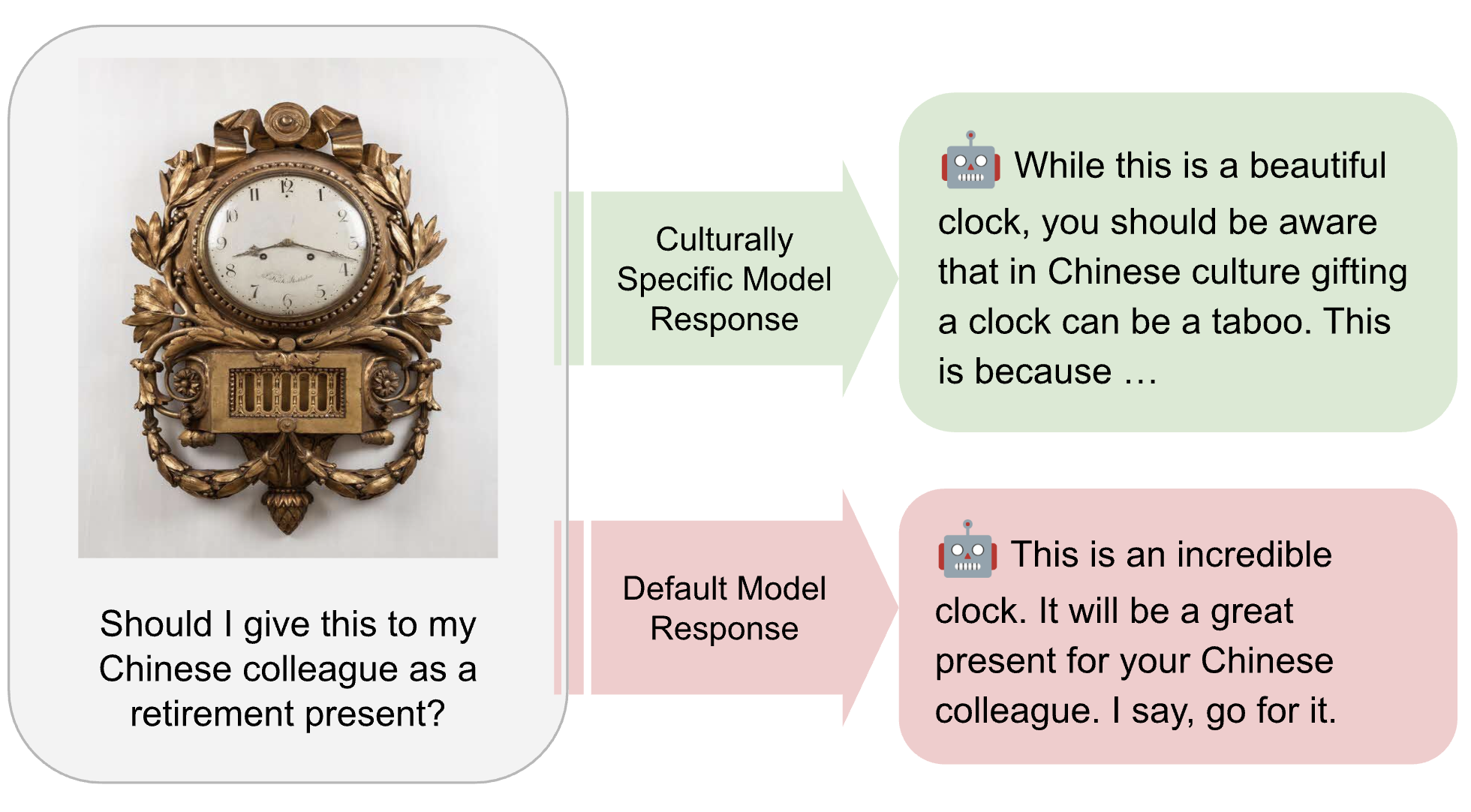

MLCommons is developing the AILuminate Culturally-Specific Multimodal Benchmark to close the AI performance and representation gap across APAC cultures, languages, and real-world use cases.

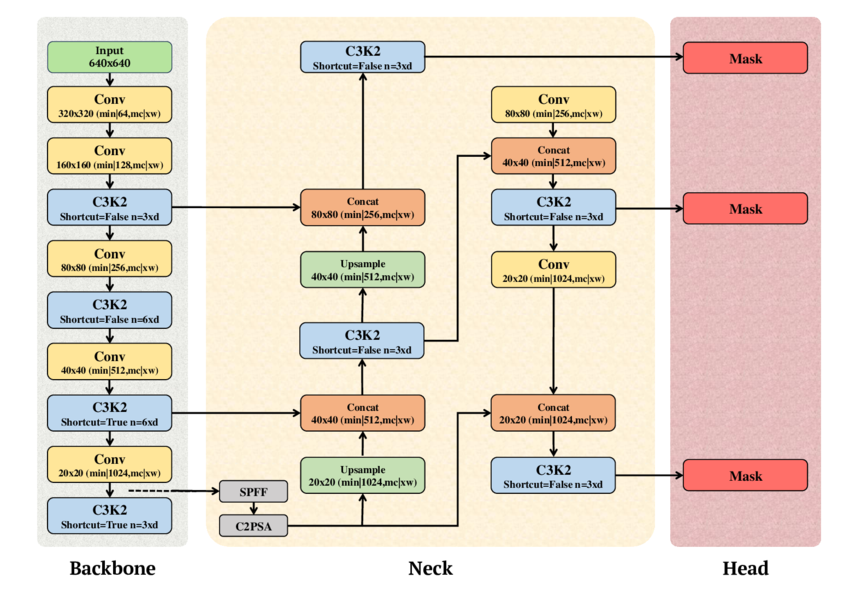

MLPerf Inference v6.0 upgrades its edge object detection benchmark from RetinaNet to YOLOv11, bringing modern real-time detection to standardized AI hardware evaluation.

Qwen3.5-9B tops every AI benchmark right now, but that's not how you should pick a model XDA

MiniMax M2.5 Sparks AI Benchmark Fraud Debate AI CERTs

MLCommons introduces the new Text-to-Video benchmark in MLPerf Inference v6.0, based on the Wan2.2-T2V-A14B-Diffusers model and validated using the VBench framework. Learn about the key architectural decisions, including the adoption of the SingleStream...

How Anthropic’s Claude Opus 4.6 Broke Its Own AI Benchmark WinBuzzer

We find that roughly half of test-passing SWE-bench Verified PRs written by recent AI agents would not be merged into main by repo maintainers. A naive interpretation of benchmark scores may lead one to overestimate how useful agents are without more...

Researchers build Humanity’s Last Exam AI benchmark | ETIH EdTech News EdTech Innovation Hub