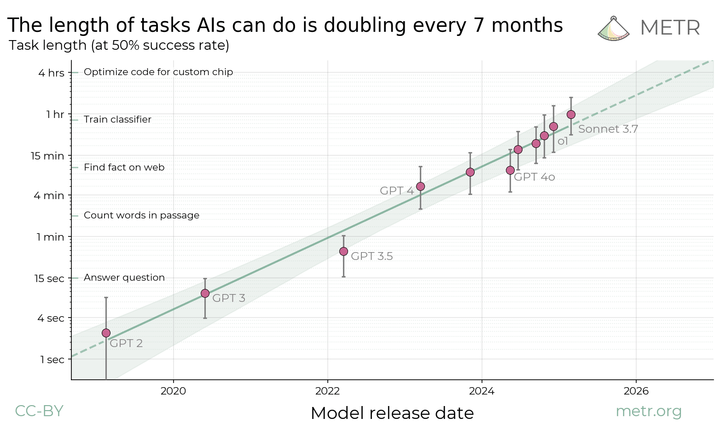

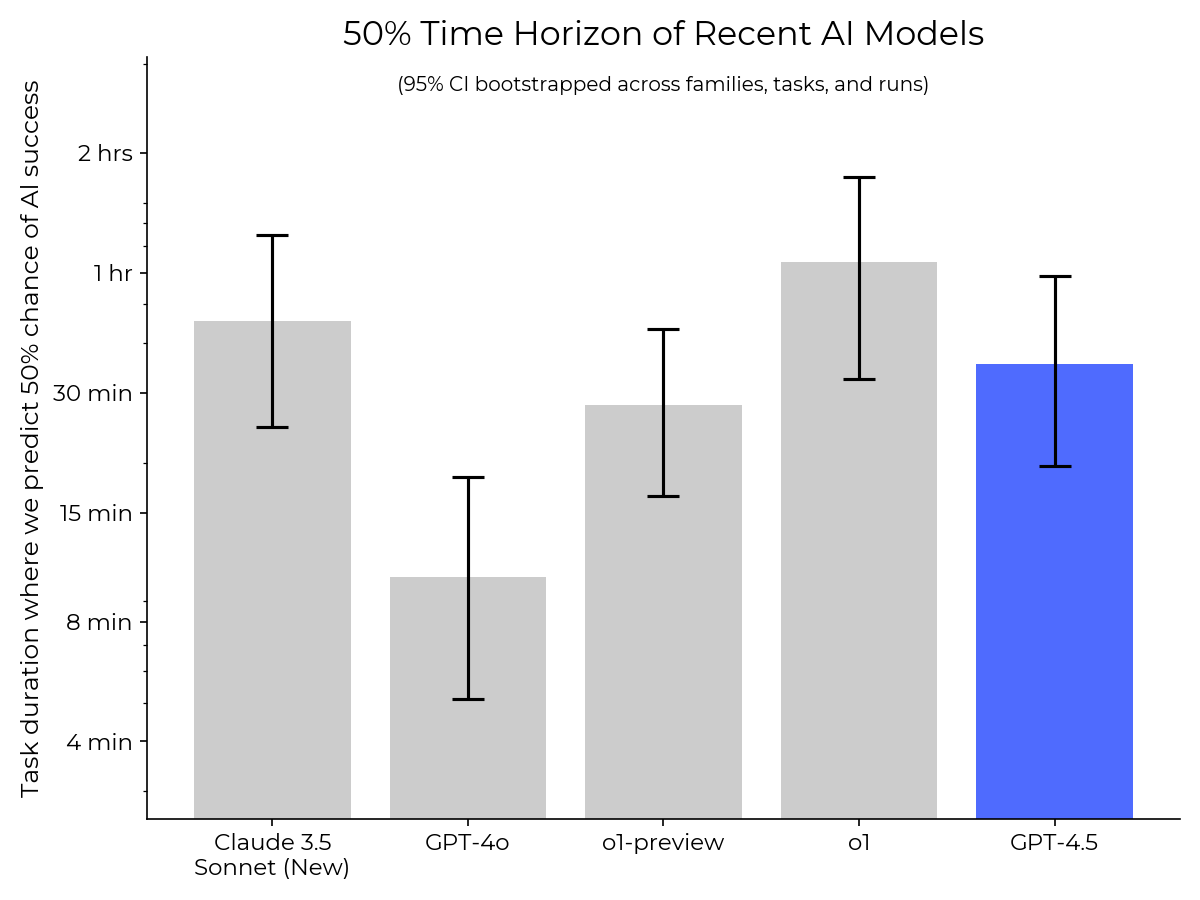

Details about METR's preliminary evaluation of DeepSeek and Qwen models

METR conducted preliminary evaluations of a set of DeepSeek and Qwen models. We found that the autonomous capabilities of mid-2025 DeepSeek models are similar to those of frontier models from late 2024.