Details about METR's preliminary evaluation of DeepSeek-R1

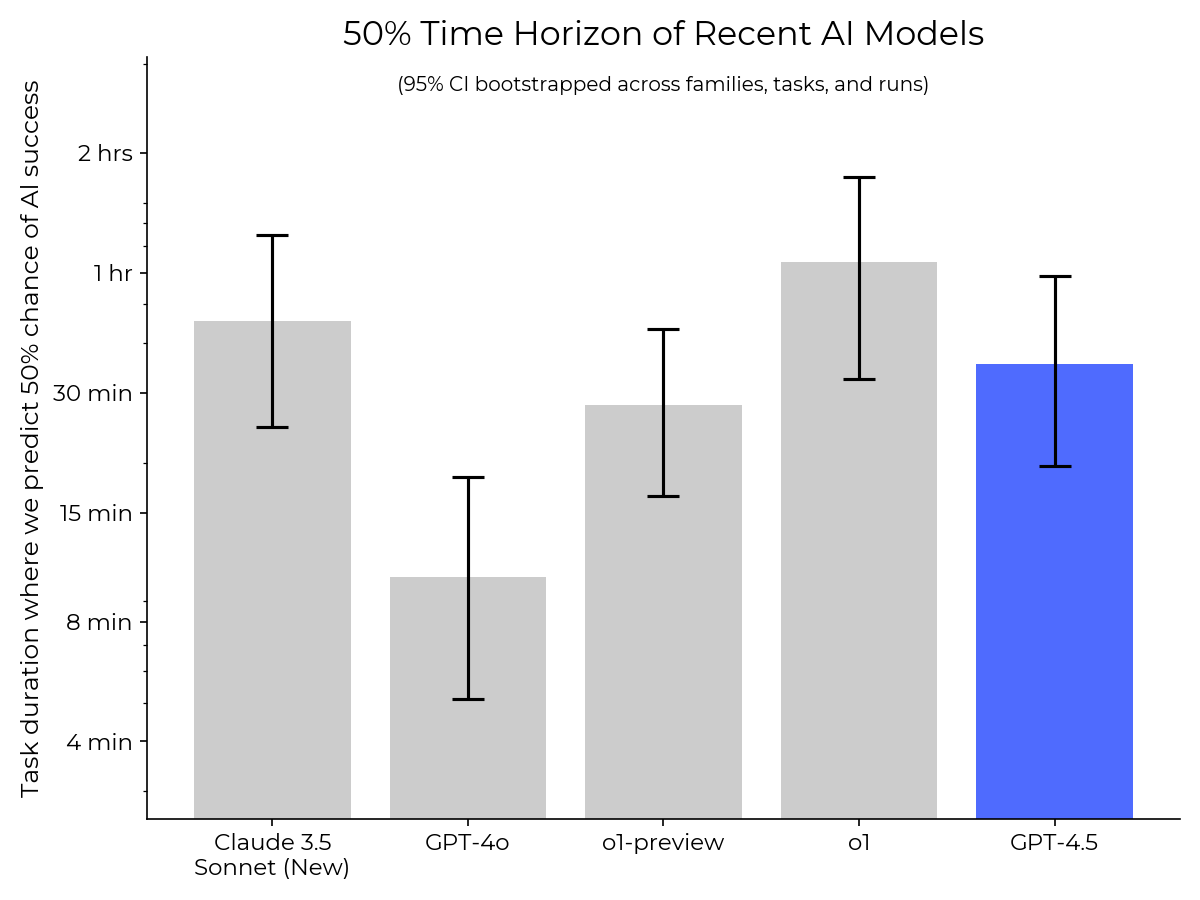

We evaluated DeepSeek-R1 for dangerous autonomous capabilities and found no evidence of dangerous capabilities beyond those of existing models such as Claude 3.5 Sonnet and GPT-4o. Interestingly, we found that it did not do substantially better than...