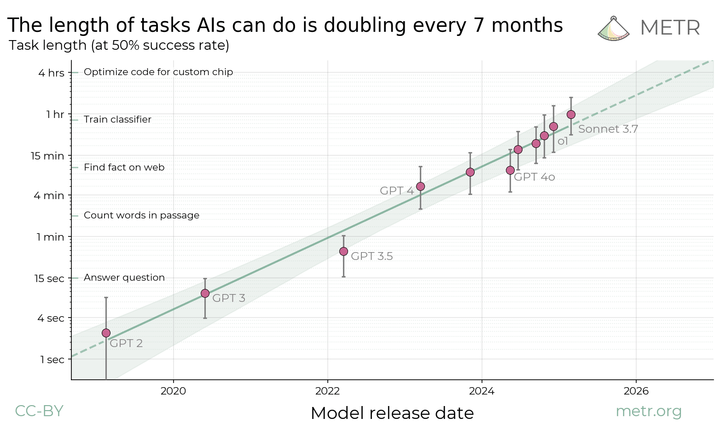

Details about METR's preliminary evaluation of OpenAI's o3 and o4-mini

METR conducted a preliminary evaluation of OpenAI's o3 and o4-mini. Both models displayed higher autonomous capabilities than the other public models tested, and o3 appears somewhat prone to "reward hacking".