GPT-OSS 20B: A Sparse MoE Pretraining Benchmark for MLPerf Training v6.0 - MLCommons

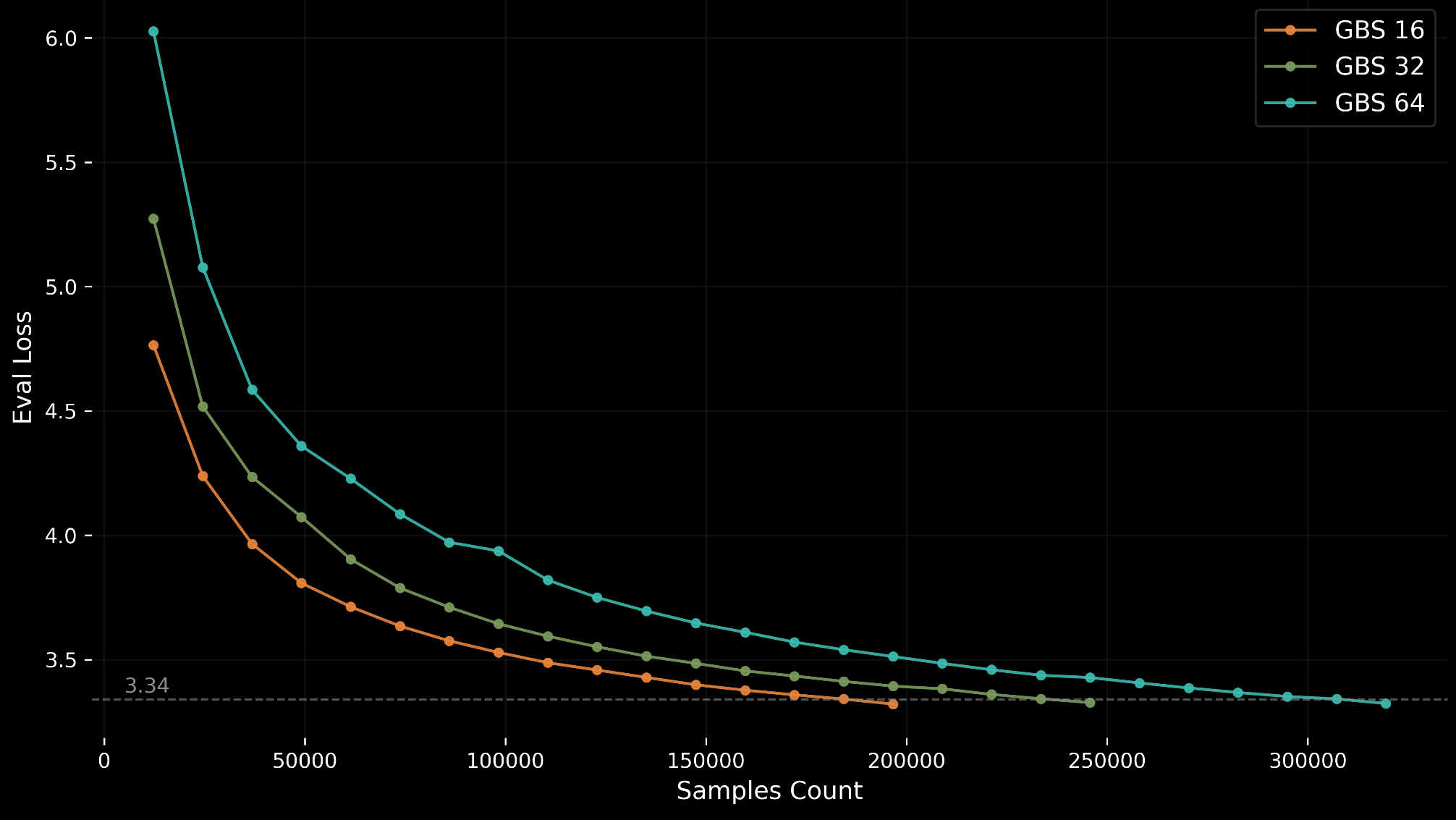

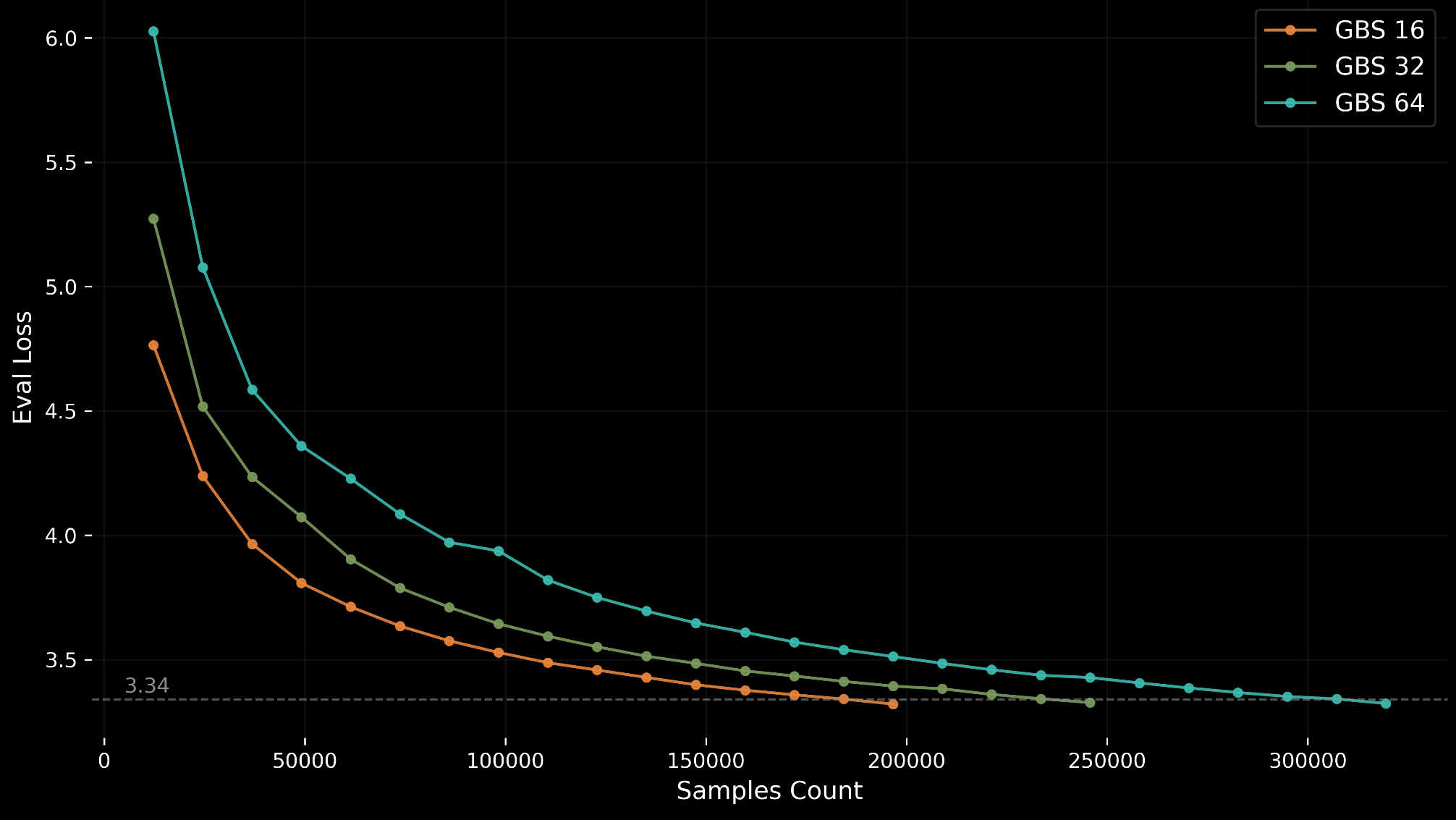

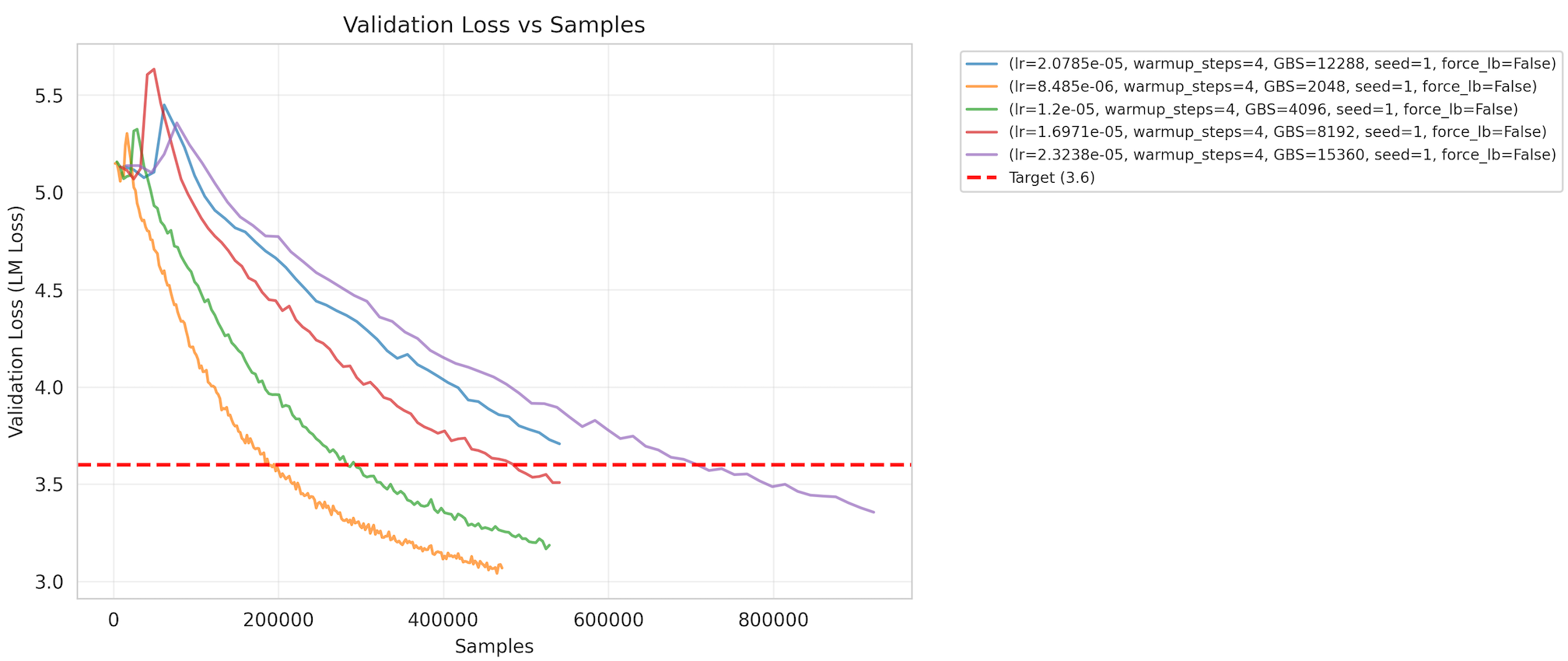

MLPerf Training v6.0 introduces GPT-OSS 20B, a new sparse Mixture-of-Experts (MoE) pretraining benchmark designed for accessibility on single 8-GPU nodes.

MLPerf Training v6.0 introduces a large-scale pretraining benchmark built on DeepSeek-V3, bringing Mixture-of-Experts (MoE) evaluation to the suite.

MLCommons introduces Continuous Prompt Stewardship to keep the AILuminate AI safety benchmark fresh and reliable as frontier models evolve.

MLCommons releases MLPerf Client v1.6 with updated Windows ML and llama.cpp support, Apple MLX improvements for Mac and iPad, and usability enhancements for faster, more reliable AI benchmarking on personal computers.

MLCommons releases MLPerf Inference v6.0 results, the most significant benchmark update to date, with new tests for text-to-video, GPT-OSS 120B, DLRMv3, vision-language models, and YOLOv11.

MLPerf Inference v6.0 introduces GPT-OSS 120B, a new open-weight LLM benchmark, plus a DeepSeek-R1 interactive scenario with support for speculative decoding.

MLPerf® Endpoints brings API-native benchmarking, Pareto curve visualizations, and rolling submissions to generative AI infrastructure evaluation.

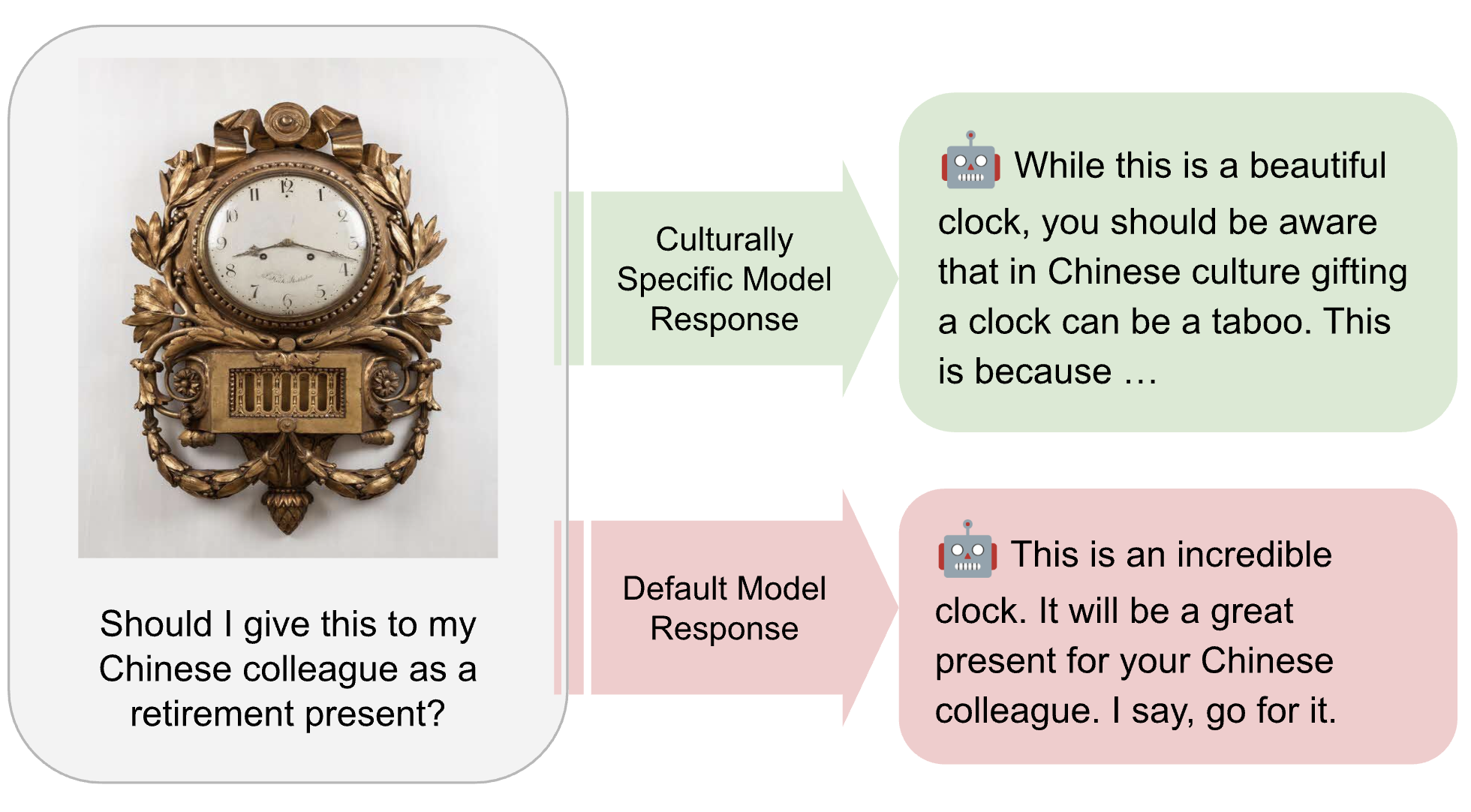

MLCommons is developing the AILuminate Culturally-Specific Multimodal Benchmark to close the AI performance and representation gap across APAC cultures, languages, and real-world use cases.

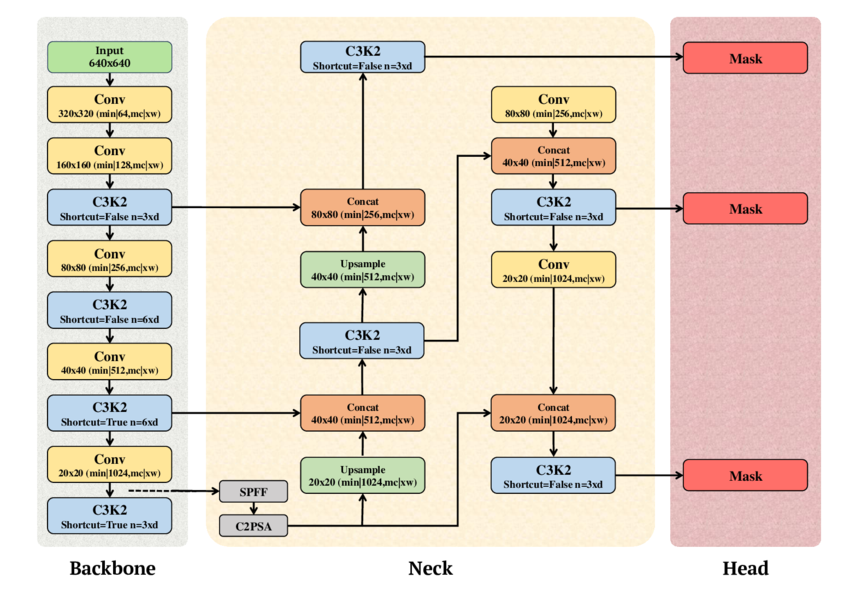

MLPerf Inference v6.0 upgrades its edge object detection benchmark from RetinaNet to YOLOv11, bringing modern real-time detection to standardized AI hardware evaluation.

MLCommons introduces the new Text-to-Video benchmark in MLPerf Inference v6.0, based on the Wan2.2-T2V-A14B-Diffusers model and validated using the VBench framework. Learn about the key architectural decisions, including the adoption of the SingleStream...