DeepSeek-V3: A Large-Scale MoE Pretraining Benchmark for MLPerf Training v6.0

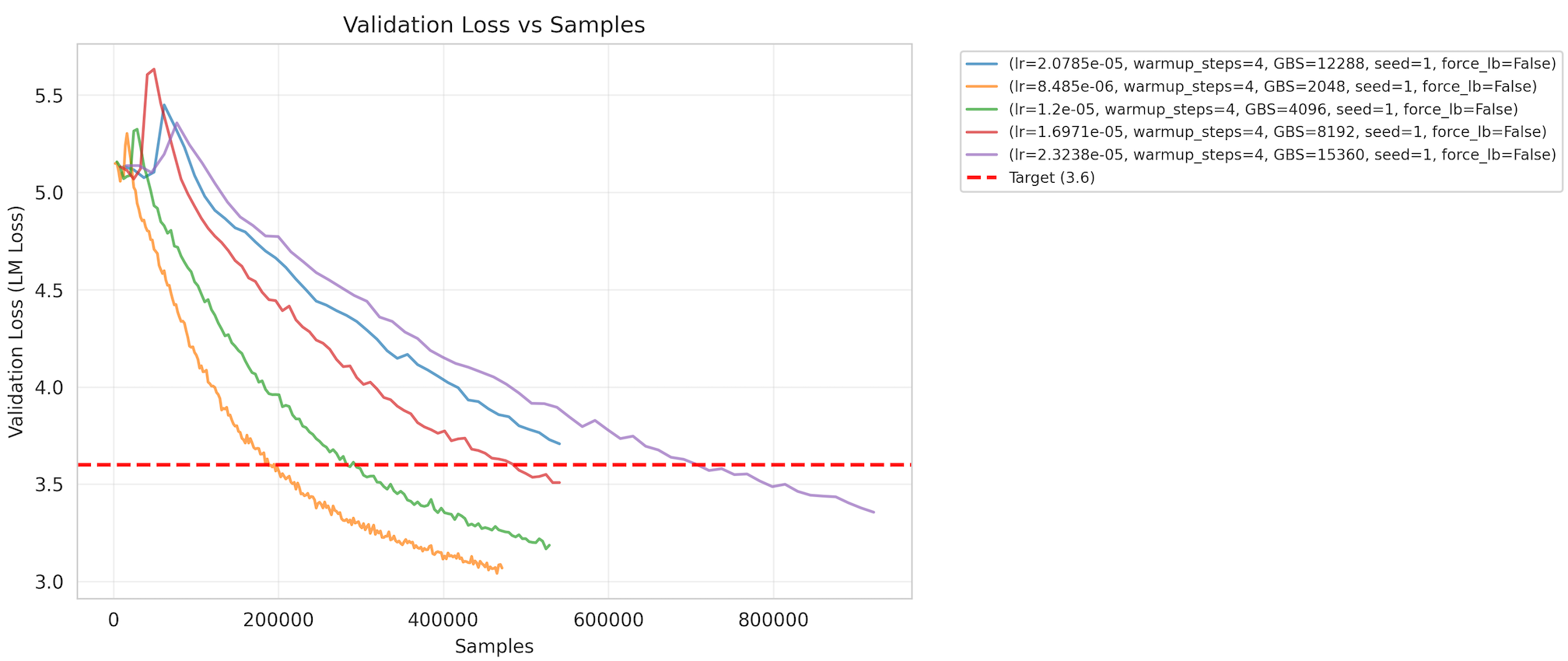

MLPerf Training v6.0 introduces a large-scale pretraining benchmark built on DeepSeek-V3, bringing Mixture-of-Experts (MoE) evaluation to the suite.

MLCommons · lori@mlcommons.org