Protecting People from Harmful Manipulation

Google DeepMind releases new findings and an evaluation framework to measure AI's potential for harmful manipulation in areas like finance and health, with the goal of enhancing AI safety.

Community feed

Learn how OpenAI’s Model Spec serves as a public framework for model behavior, balancing safety, user freedom, and accountability as AI systems advance.

Exclusive: This new benchmark could expose AI’s biggest weakness (Fast Company)

MLPerf Inference v6.0 introduces GPT-OSS 120B, a new open-weight LLM benchmark, plus a DeepSeek-R1 interactive scenario with support for speculative decoding.

Outlier Emphasizes Expert Contributor Network for AI Model Evaluation (TipRanks)

Insilico Medicine Highlights AI Benchmark Results in Cardiovascular Drug Target Discovery (TipRanks)

Alexander Barry examines how different modelling choices affect METR's time horizon estimates.

MLPerf® Endpoints brings API-native benchmarking, Pareto curve visualizations, and rolling submissions to generative AI infrastructure evaluation.

Thomas Kwa describes a tabletop exercise where METR researchers simulated having access to ~200-hour time horizon AIs.

Google DeepMind proposes a cognitive framework to evaluate AGI and launches a Kaggle hackathon to build capability benchmarks.

AI benchmark numbers are meaningless — here’s what to look for instead (MakeUseOf)

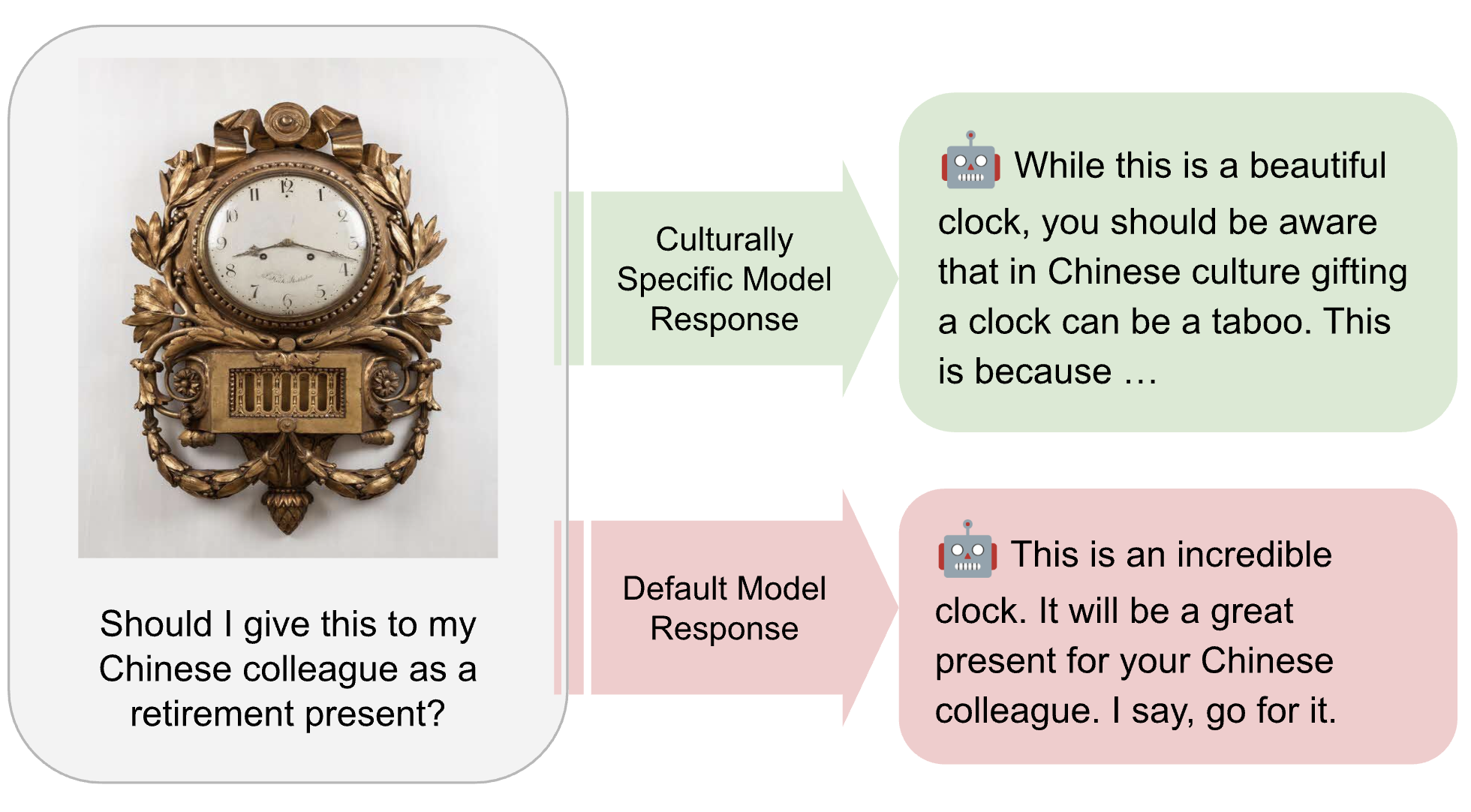

MLCommons is developing the AILuminate Culturally-Specific Multimodal Benchmark to close the AI performance and representation gap across APAC cultures, languages, and real-world use cases.
