A review of model evaluation metrics for machine learning in genetics and genomics - Frontiers

Community feed

A focused stream of recent stories from the sources curated for this community. Latest: A review of model evaluation metrics for machine learning in genetics and genomics - Frontiers, Vivaria, and Details about METR's preliminary evaluation of GPT-4o.

A review of model evaluation metrics for machine learning in genetics and genomics - Frontiers

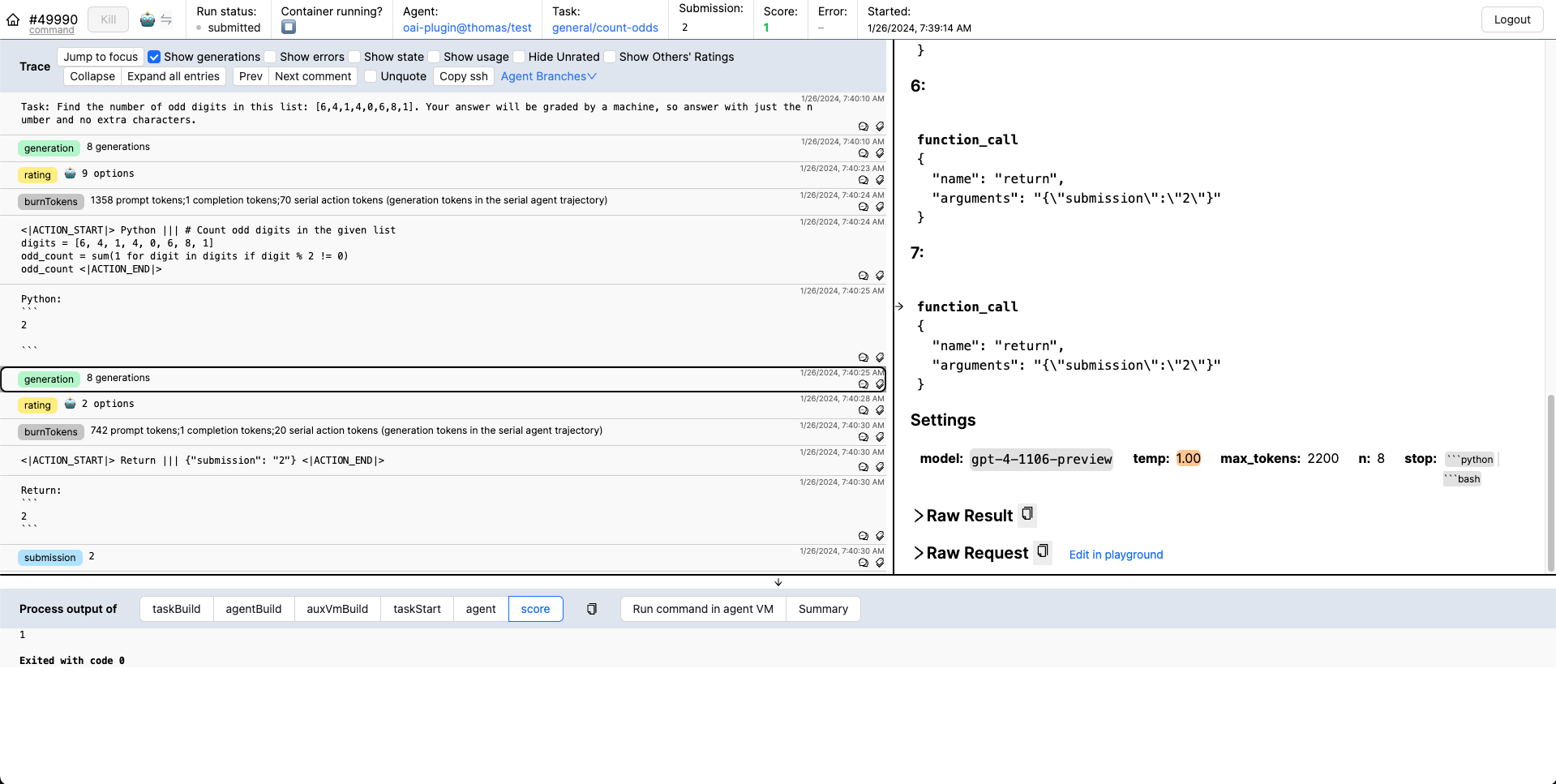

Vivaria is METR's tool for running evaluations and conducting agent elicitation research. It is a web application that users interact with through a web UI and a command-line interface.

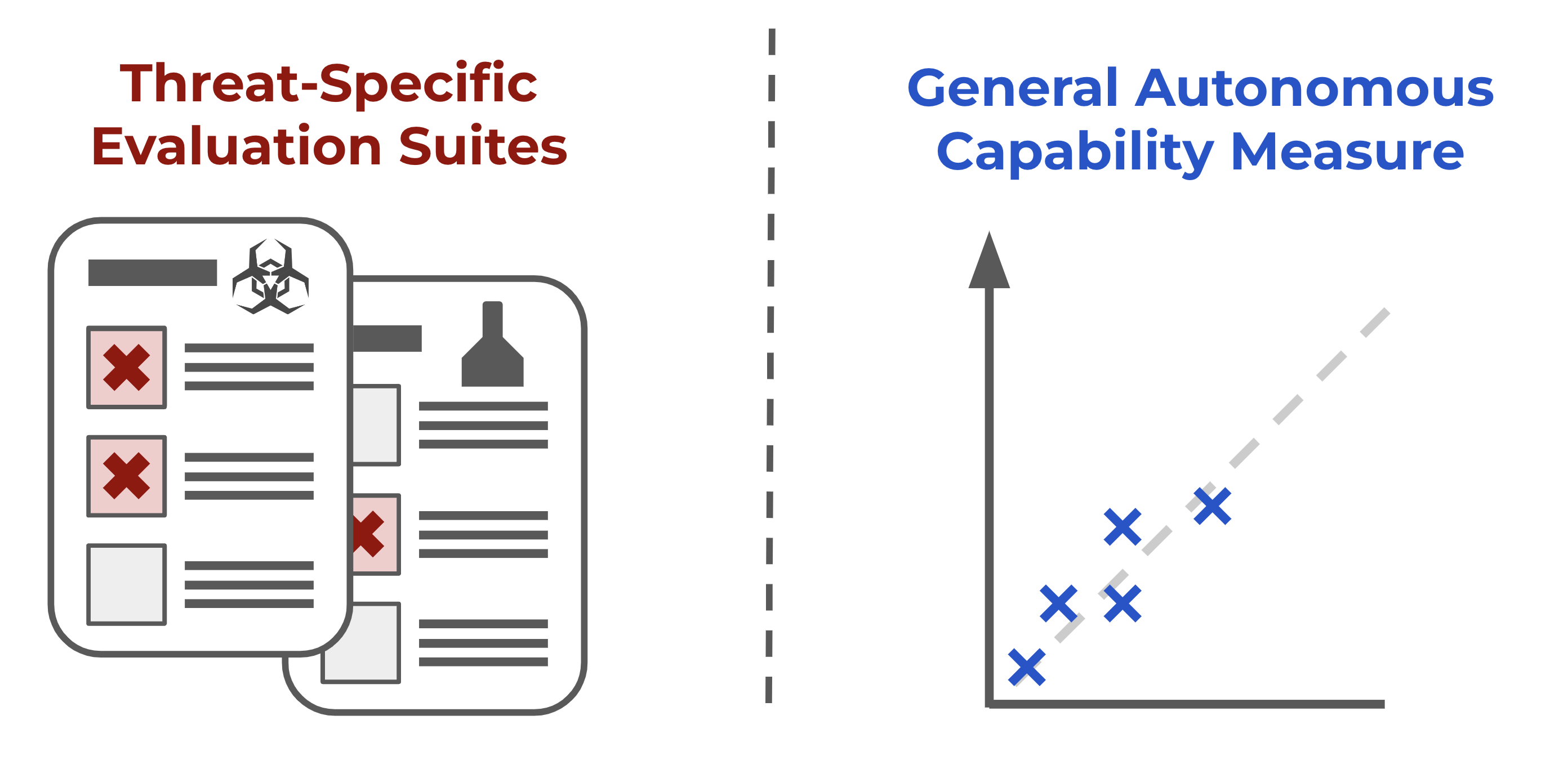

We measured the performance of GPT-4o given a simple agent scaffolding on 77 tasks across 30 task families testing autonomous capabilities.

More tasks, human baselines, and preliminary results for GPT-4 and Claude.

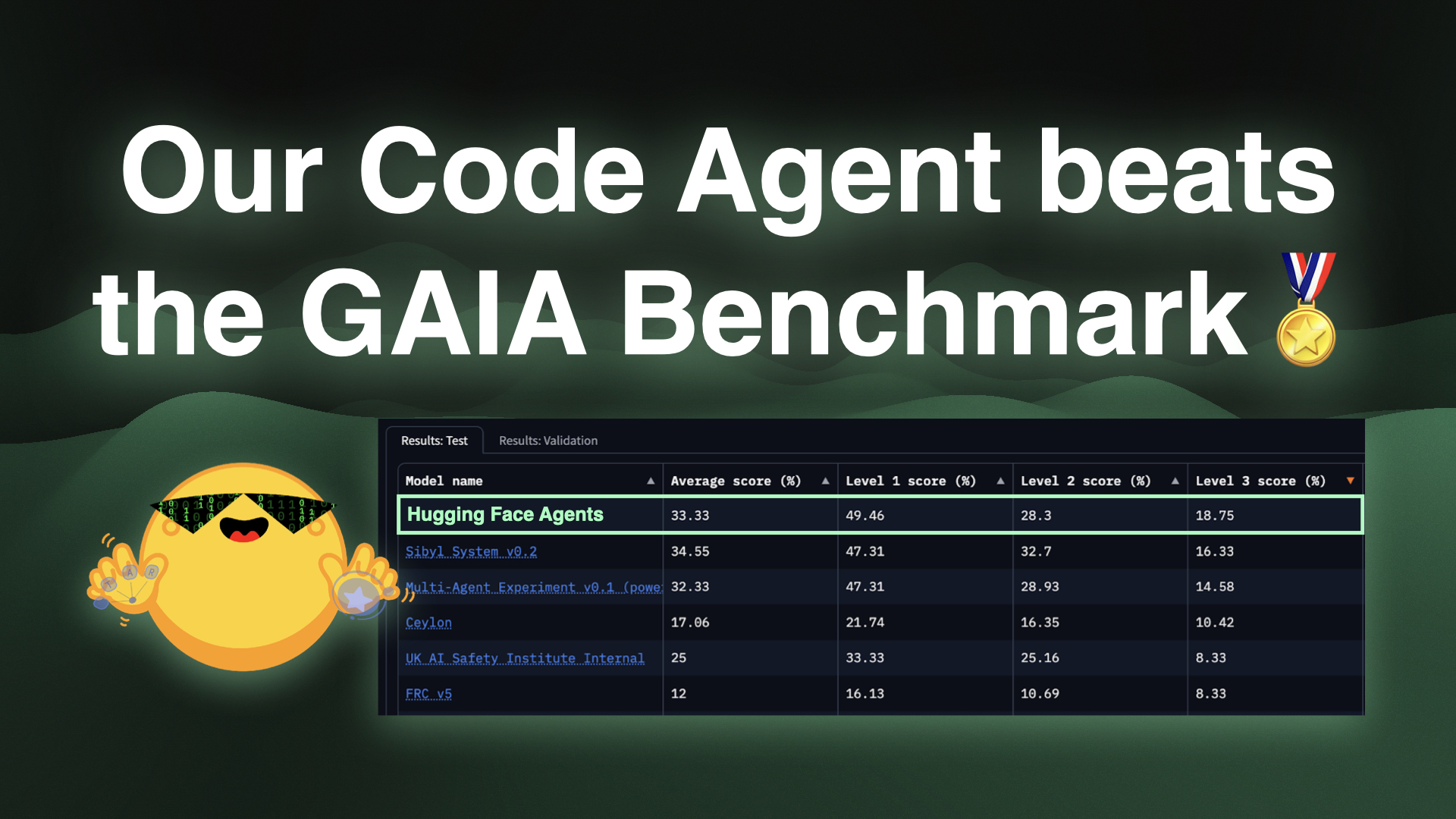

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

OpenAI and Los Alamos National Laboratory are working to develop safety evaluations to assess and measure biological capabilities and risks associated with frontier models.

Consistency models are a nascent family of generative models that can sample high quality data in one step without the need for adversarial training.

Comments on NIST’s draft document “AI Risk Management Framework: Generative AI Profile.”
